Introduction

Data teams are drowning. Analysts spend their days buried in SQL ticket backlogs, business stakeholders wait days for a single report, and expensive BI tools sit unused because they're too complex for anyone outside the data team to touch. Only 25% of employees actively use BI and analytics tools, and highly skilled data scientists spend 83% of their time on tasks that could be automated, including 45% on data prep and 20% on building dashboards.

Generative AI is changing this dynamic. It takes a plain-English question and returns a finished output—a SQL query, a chart, a written narrative—in seconds rather than days. Below are the use cases where this is already delivering measurable results, and the practices that determine whether an implementation sticks or stalls.

TL;DR

- Generative AI automates data prep, query writing, and report generation—freeing analysts for strategic work

- Top use cases: natural language querying, automated pipeline generation, AI dashboards, scenario modeling, and anomaly detection

- Governed context and semantic layers must be in place before deploying generative AI on your data

- The most common failure: deploying AI on undocumented or inconsistent data—and getting confidently wrong answers at scale

What Is Generative AI in Data Analytics?

Generative AI differs fundamentally from traditional **descriptive and predictive analytics**. Rather than classifying patterns in existing data or running pre-built reports, it generates new artifacts — SQL queries, code, narratives, charts — from natural language instructions. Traditional BI tools require SQL expertise and manual dashboard construction. Generative AI removes both barriers.

The practical shift is immediate. Business users ask questions in plain English, get answers in seconds, and refine them conversationally — no data request ticket, no waiting on an analyst. This directly addresses one of the most persistent problems in enterprise analytics: low BI adoption among non-technical users.

The numbers back it up. Worldwide generative AI spending is expected to reach $644 billion in 2025, and enterprise usage jumped from 55% in 2023 to 75% in 2024. Those figures cover generative AI as a whole rather than analytics alone, but they indicate how quickly conversational tools are becoming part of everyday enterprise work.

What this looks like in practice:

- Natural language queries replace SQL — anyone on the team can interrogate data directly

- On-demand reports eliminate analyst bottlenecks for routine data pulls

- Conversational iteration lets users refine results without starting over

- Auto-generated narratives turn raw query output into readable summaries

Top Use Cases for Generative AI in Data Analytics

Natural Language Querying and Self-Service Analytics

Natural language interfaces transform how users interact with data. A user types a question—"What were our top revenue-generating segments last quarter?"—and the AI interprets intent, generates the appropriate query, runs it against the data warehouse, returns a visualization, and writes a plain-language summary. All in seconds.

Accuracy depends heavily on whether the AI is grounded in a documented semantic layer. GPT-4o's execution accuracy plummets from 86% on academic benchmarks to just 6% on real-world enterprise schemas. When the system is anchored to defined business metrics and dbt models rather than generating raw SQL against undocumented tables, accuracy jumps to 92-99% with lower hallucination rates.

Sylus exemplifies this approach: it grounds all analysis in governed dbt models and documentation, ensuring the AI's answers are consistent with how the business actually defines its metrics. Cox 2M reduced time to actionable insights by 88% using natural language querying, and Act-On experienced over 60% higher customer report usage within 30 days of implementing AI-powered search.

Automated Data Preparation and Pipeline Generation

Generative AI writes ETL/ELT code, dbt models, and transformation logic from natural language descriptions of data needs. A user describes "pull yesterday's sales, join with inventory, flag stockouts," and the AI generates the code.

Data scientists spend about 45% of their time on data preparation tasks, including loading and cleaning data, so automating even part of this work frees a substantial share of analyst capacity for higher-value insight generation. In a test of 50 real dbt modeling tasks, AI-generated dbt models worked correctly on the first try 76% of the time, and another 22% needed only minor adjustments, which suggests the approach is close to production-ready for routine modeling work.
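To make the request quoted above concrete, here is a minimal sketch of the kind of SQL an assistant might produce for "pull yesterday's sales, join with inventory, flag stockouts," run against an in-memory SQLite database. The table names, columns, and sample rows are hypothetical, and a real pipeline would target your warehouse and dbt project rather than SQLite.

```python
import sqlite3

# Hypothetical tables and sample rows, invented for illustration only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE sales (sku TEXT, sale_date TEXT, units_sold INTEGER);
CREATE TABLE inventory (sku TEXT, units_on_hand INTEGER);
INSERT INTO sales VALUES
  ('A-1', '2024-05-01', 12),
  ('B-2', '2024-05-01', 7),
  ('A-1', '2024-04-30', 3);
INSERT INTO inventory VALUES ('A-1', 0), ('B-2', 40);
""")

# Join one day's sales to current inventory and flag SKUs with nothing on hand.
STOCKOUT_SQL = """
SELECT s.sku,
       SUM(s.units_sold) AS units_sold,
       i.units_on_hand,
       i.units_on_hand = 0 AS is_stockout
FROM sales AS s
JOIN inventory AS i ON i.sku = s.sku
WHERE s.sale_date = :target_date
GROUP BY s.sku, i.units_on_hand
ORDER BY s.sku
"""

def flag_stockouts(connection, target_date):
    """Return (sku, units_sold, units_on_hand, is_stockout) rows for one day."""
    return connection.execute(STOCKOUT_SQL, {"target_date": target_date}).fetchall()

# The date is passed in explicitly so the example is reproducible.
rows = flag_stockouts(conn, "2024-05-01")
for row in rows:
    print(row)
```

With the sample rows, SKU `A-1` comes back with `is_stockout` set to 1 and `B-2` with 0. Generated code like this still needs review against your own schema before it runs in production.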

AI-Generated Dashboards and Narrative Reporting

Generative AI goes beyond auto-charting. It generates context-aware visualizations appropriate to the data type and writes analytical narratives that identify what changed, why it matters, and what action to consider. This contrasts sharply with traditional dashboards that show what happened but leave interpretation to the viewer.

60-70% of dashboards go unused, ending up in the "dashboard graveyard." Data storytelling elements improve single-insight comprehension and foster more accurate answers compared to conventional visualizations. AI-generated narratives cut through visual clutter, improving executive comprehension and decision speed.

Predictive Analytics and Scenario Modeling

Generative AI enables "what-if" scenario modeling at speed. Users define conditions—a recession scenario, a supply chain disruption—and the AI simulates multiple possible futures with demand projections, financial implications, and recommended responses. Work that previously required weeks of manual financial modeling now happens in minutes.

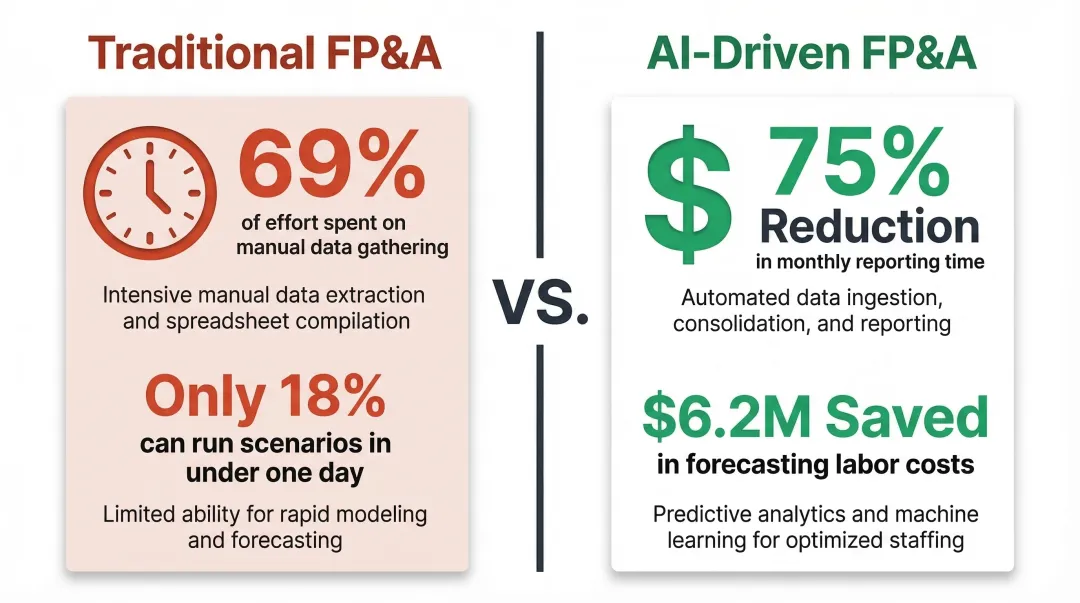

The productivity gap is steep:

- 69% of FP&A effort is tied up in manual data gathering and reporting, and only 18% of organizations can run scenarios in under one day

- OneStream's AI-driven forecasting cut FP&A team time on monthly reporting by 75%, saving $6.2 million in avoided forecasting labor and overtime
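The arithmetic behind a what-if comparison is simple even when the surrounding tooling is not. The sketch below applies demand and cost shocks to a baseline and compares the outcomes. Every number, the `Scenario` class, and the `project` function are illustrative assumptions; a generative system would propose and narrate scenarios like these on top of a model your finance team trusts.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Scenario:
    name: str
    demand_change: float     # -0.15 means unit demand falls 15%
    unit_cost_change: float  # 0.20 means unit cost rises 20%

def project(baseline_units, unit_price, unit_cost, scenario):
    """Project revenue and gross margin for one scenario."""
    units = baseline_units * (1 + scenario.demand_change)
    cost = unit_cost * (1 + scenario.unit_cost_change)
    return {
        "scenario": scenario.name,
        "revenue": round(units * unit_price, 2),
        "gross_margin": round(units * (unit_price - cost), 2),
    }

# Hypothetical scenarios and baseline figures.
scenarios = [
    Scenario("baseline", 0.0, 0.0),
    Scenario("recession", -0.15, 0.0),
    Scenario("supply_disruption", -0.05, 0.20),
]
results = [project(10_000, 50.0, 30.0, s) for s in scenarios]
for result in results:
    print(result)
```

Keeping the scenario definitions as data makes it easy to add or adjust cases without touching the projection logic.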

Anomaly Detection and Proactive Alerting

Generative AI learns baseline behavior patterns across metrics and flags meaningful deviations with probabilistic explanations rather than binary rule-based alerts. This reduces alert fatigue and supports proactive decision-making.

The scale of the problem is well documented:

- 20-30% of Security Operations Center alerts go uninvestigated because of sheer alert volume

- 73% of organizations cite false positives as their top challenge in threat detection

Smarter anomaly detection changes this calculus. PayPal's deep learning fraud model blocks an estimated $2 billion in fraudulent transactions annually while cutting false positive rates by 50% — a direct result of moving from rule-based alerts to probabilistic, pattern-aware detection.
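The difference between a binary rule and a probabilistic flag can be illustrated with a simple statistical baseline. The sketch below is not a generative or deep learning model; it assumes roughly normal metric values and uses a z-score purely to show what a graded alert looks like. The `anomaly_score` function, the fixed threshold, and the sample values are hypothetical.

```python
from math import erf, sqrt
from statistics import mean, stdev

def anomaly_score(history, value):
    """Return (z, p): the z-score against the baseline and the two-sided
    tail probability of a deviation at least this large, assuming normality."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        raise ValueError("baseline has no variance; cannot score deviations")
    z = (value - mu) / sigma
    p = 1 - erf(abs(z) / sqrt(2))  # P(|Z| >= |z|) for a standard normal
    return z, p

# Hypothetical daily metric values forming the baseline.
history = [100, 102, 98, 101, 99, 100, 103, 97]

RULE_THRESHOLD = 105  # a binary rule only says "above" or "not above"

for observed in (101, 110):
    z, p = anomaly_score(history, observed)
    rule_fired = observed > RULE_THRESHOLD
    print(f"value={observed} rule_fired={rule_fired} z={z:.2f} p={p:.2g}")
```

The rule gives a yes or no answer, while the score says how unusual the value is, which lets a team rank alerts instead of treating them all alike.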

Best Practices for Implementing Generative AI in Analytics

Build a Semantic Layer and Governed Context Before Deploying AI

A semantic layer — mapping business-friendly metric definitions to underlying technical tables — is the foundational prerequisite for reliable AI outputs. Without it, the AI generates answers that are syntactically correct but semantically wrong.

Governed context means consistent metric naming, documented business definitions, and clear data lineage. Powering an LLM with governed semantic definitions via the dbt Semantic Layer improved accuracy by 3x in benchmark testing. dbt models and dbt documentation are an ideal foundation: when AI is grounded in tested, documented transformations, it inherits the logic your data team has already validated. This is the difference between an AI that confidently invents numbers and one that accurately answers business questions.
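As a rough illustration of what grounding means, the sketch below keeps metric definitions in one governed dictionary and only compiles queries for metrics and dimensions that appear there. It is a plain-Python stand-in, not the dbt Semantic Layer API, and the metric names, table names, and `compile_metric_query` helper are all hypothetical.

```python
# Hypothetical governed definitions: one agreed expression per business metric.
METRICS = {
    "revenue": {
        "table": "fct_orders",
        "expression": "SUM(order_total)",
        "description": "Gross order value, excluding cancelled orders.",
    },
    "active_customers": {
        "table": "fct_orders",
        "expression": "COUNT(DISTINCT customer_id)",
        "description": "Customers with at least one order in the period.",
    },
}

# Dimensions each table is allowed to be grouped by.
ALLOWED_DIMENSIONS = {"fct_orders": {"segment", "order_month"}}

def compile_metric_query(metric, group_by):
    """Build SQL from governed definitions; refuse anything undefined."""
    if metric not in METRICS:
        raise KeyError(f"'{metric}' is not a governed metric")
    spec = METRICS[metric]
    if group_by not in ALLOWED_DIMENSIONS[spec["table"]]:
        raise KeyError(f"'{group_by}' is not an allowed dimension for {spec['table']}")
    return (
        f"SELECT {group_by}, {spec['expression']} AS {metric} "
        f"FROM {spec['table']} GROUP BY {group_by}"
    )

print(compile_metric_query("revenue", "segment"))
```

The design choice is that the model selects from defined metrics instead of inventing SQL, so a request for an undefined metric fails loudly rather than returning a plausible but wrong number.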

Resolve Data Quality Issues Before Scaling AI

Poor data quality doesn't produce slightly wrong outputs at AI scale — it produces confidently wrong outputs delivered at speed to every stakeholder. Poor data quality costs organizations an average of $12.9 million every year in wasted resources and lost opportunities.

Implement automated data quality monitoring — data tests, freshness checks, SLAs on key tables — before you scale AI into production. Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data. Getting data foundations right first is what separates successful deployments from stalled ones.
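Two of the checks mentioned above, a data test and a freshness check, can be sketched in a few lines. In practice these would usually live in your transformation or observability tooling; the function names, sample rows, and the 24-hour threshold here are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone

def find_null_rows(rows, column):
    """Return the rows where a required column is missing or None."""
    return [row for row in rows if row.get(column) is None]

def is_fresh(latest_loaded_at, now, max_age=timedelta(hours=24)):
    """Return True if the newest record was loaded within the allowed window."""
    return (now - latest_loaded_at) <= max_age

# Hypothetical sample of a key table.
orders = [
    {"order_id": 1, "customer_id": "c-17",
     "loaded_at": datetime(2024, 5, 1, 6, 0, tzinfo=timezone.utc)},
    {"order_id": 2, "customer_id": None,
     "loaded_at": datetime(2024, 5, 1, 7, 0, tzinfo=timezone.utc)},
]

# A fixed "now" keeps the example reproducible.
now = datetime(2024, 5, 2, 9, 0, tzinfo=timezone.utc)

null_rows = find_null_rows(orders, "customer_id")
fresh = is_fresh(max(order["loaded_at"] for order in orders), now)

print(f"rows failing not-null check: {len(null_rows)}")
print(f"table within freshness SLA: {fresh}")
```

Here one row fails the not-null test and the table is 26 hours old, so both checks would block an AI rollout on this table until the issues are resolved.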

Maintain Human-in-the-Loop Validation

Run AI-generated insights in parallel with manual analysis during the first weeks of deployment to catch errors before they influence decisions. A feedback loop (where analysts flag incorrect outputs) progressively improves output reliability and builds organizational trust in the system.

Validation matters most during initial rollout, when errors are most likely and trust in the system is still being established. Successful teams position AI as an accelerant on top of strong governance, not a workaround for weak foundations.
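A parallel run can be as simple as comparing AI-generated figures with the analyst's figures and routing disagreements to review. The sketch below assumes both sets of results are available as dictionaries keyed by metric name; the `compare_runs` helper, the 1% tolerance, and the sample values are hypothetical.

```python
def compare_runs(manual, ai, rel_tolerance=0.01):
    """Return metrics where the AI output is missing or differs from the
    manual figure by more than the relative tolerance."""
    flagged = {}
    for metric, expected in manual.items():
        if metric not in ai:
            flagged[metric] = "missing from AI output"
            continue
        difference = abs(ai[metric] - expected)
        if difference > rel_tolerance * abs(expected):
            flagged[metric] = f"manual={expected}, ai={ai[metric]}"
    return flagged

# Hypothetical figures from the same reporting period.
manual_results = {"q1_revenue": 1_200_000.0, "churn_rate": 0.042, "active_customers": 8_310}
ai_results = {"q1_revenue": 1_203_000.0, "churn_rate": 0.061}

flagged = compare_runs(manual_results, ai_results)
for metric, reason in flagged.items():
    print(f"review needed: {metric} ({reason})")
```

Flagged metrics are exactly the cases analysts should feed back into the system, which is how the feedback loop described above gets its input.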

Apply Security and Privacy Guardrails From Day One

Sending sensitive business data to third-party LLM APIs creates compliance risk. Best practices include:

- Private or self-hosted model deployments to keep data off shared infrastructure

- Data anonymization pipelines before any data reaches an LLM

- Vendor agreements that explicitly prohibit training on customer data

Sylus is built with these requirements in mind: SOC 2 Type II and HIPAA compliant, available as a self-hosted deployment, and neither Sylus nor its model partners train on customer data. For regulated industries with strict data residency requirements, that level of specificity in vendor agreements matters.
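As one small piece of a pre-LLM scrubbing step, the sketch below replaces email addresses with salted hash tokens before text leaves your environment. Strictly speaking this is pseudonymization rather than full anonymization, the regular expression covers only common email formats, and the `pseudonymize` function and salt handling are assumptions for illustration; a real pipeline would cover more identifier types and manage the salt as a secret.

```python
import hashlib
import re

# Matches common email formats only; not a complete address validator.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

def pseudonymize(text, salt):
    """Replace each email address with a stable, salted hash token."""
    def _replace(match):
        normalized = match.group(0).lower()
        digest = hashlib.sha256((salt + normalized).encode("utf-8")).hexdigest()
        return f"<email:{digest[:10]}>"
    return EMAIL_RE.sub(_replace, text)

# Hypothetical free-text field from a support table.
note = "Refund requested by jane.doe@example.com; cc Jane.Doe@example.com."
scrubbed = pseudonymize(note, salt="rotate-me")
print(scrubbed)
```

Because the token is derived from the normalized address, the same person maps to the same token across rows, so the model can still reason about repeat customers without seeing who they are.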

Common Challenges to Anticipate

Three friction points derail most generative AI analytics rollouts before they deliver value.

Hallucination in Business Reporting

AI models can generate plausible-sounding but fabricated metrics, trends, or correlations — and decisions get made on invented numbers. Practical detection methods include cross-validation against known benchmarks, anomaly checks on AI outputs, and mandatory human review for high-stakes reports.

The Data Governance Gap

Organizations routinely rush to deploy conversational analytics before their data catalog, metric definitions, or naming conventions are clean. 77% of organizations rate their data quality as average or worse, a figure that worsens as data complexity grows. Teams that treat AI as a substitute for sound data practices — rather than an accelerant built on top of them — consistently underperform.

Organizational Change

Generative AI shifts the analyst role from query writer and report builder to insight validator and data model steward. Adding an AI tool without restructuring workflows rarely moves the needle. Teams that succeed redefine responsibilities and build validation skills before they scale adoption.

How to Choose the Right Generative AI Analytics Tool

Evaluate tools on four key criteria:

- Semantic layer grounding — Are natural language answers grounded in a documented semantic layer, or does the tool generate raw SQL against bare tables?

- Security and compliance posture — SOC 2, HIPAA, self-hosted availability

- Integration with existing data stack — Warehouse connectors, dbt compatibility, Slack/email delivery

- Pricing model — Per-seat licensing vs. usage-based

How a tool scores on those criteria depends largely on its architecture. The market has split into two camps: embedded AI copilots bolted onto existing BI tools (faster to adopt, but constrained by legacy structure) and purpose-built AI analytics platforms designed for governed, conversational workflows. Embedded copilots extend dashboards you already have; standalone platforms support agentic workflows where AI runs multi-step analyses without hand-holding.

Before committing to a vendor, these three questions cut through most of the noise:

- Does the tool ground answers in documented metric definitions, or generate ad hoc SQL?

- Can it be self-hosted for teams handling sensitive or regulated data?

- Does the vendor — or its model partners — train on your company's data?

The last two matter more than most buyers expect. Platforms like Sylus offer self-hosted deployment and a firm policy against training models on customer data, which is often the deciding factor for regulated industries like healthcare and finance.

Frequently Asked Questions

Can you use generative AI for data analytics?

Yes. Generative AI enables natural language querying, automated report generation, code writing, and AI-driven insights without requiring SQL expertise. It transforms how business users access and interact with data.

Which generative AI is best for data analytics?

No single tool fits every team. The right choice depends on your data stack, compliance requirements, and whether you need a copilot embedded in an existing BI tool or a purpose-built governed analytics platform.

What is a data analyst with generative AI?

The analyst role shifts away from manually writing queries and building reports. Analysts focus instead on designing governed data models, validating AI-generated outputs, and interpreting results strategically.

What are the 4 types of data analytics?

The four types are descriptive (what happened), diagnostic (why it happened), predictive (what will happen), and prescriptive (what should we do) analytics. Generative AI enhances all four by automating analysis and generating outputs across each type.

How do I know if my data is ready for generative AI analytics?

Look for three readiness signals: documented metric definitions and a semantic layer are in place, automated data quality monitoring is active, and analysts spend less than 30% of their time on data cleaning.