Introduction

Manual reporting is breaking down. As data volumes grow and clients expect real-time visibility, agencies and data teams are spending more hours compiling reports and fewer hours acting on them.

Automated client reporting addresses this. Software continuously collects data from multiple sources, processes it, and delivers structured performance reports with minimal manual effort. For agencies, data teams, and client-facing business units in 2026, it is no longer optional.

Modern organizations manage an average of 275 SaaS applications, and the marketing technology landscape alone has grown to over 13,000 distinct tools. Client data is more fragmented than ever, scattered across ad platforms, CRMs, analytics tools, and databases, and manual aggregation across that sprawl does not scale.

This guide covers what AI-enhanced automated reporting is, how it works end-to-end, what separates AI-driven reporting from basic scheduling tools, and the practical factors that determine whether it actually delivers reliable results.

TL;DR

- Automated client reporting replaces manual data collection with systems that pull, process, and deliver insights on schedules or triggers

- AI adds natural language summaries, anomaly detection, and ad-hoc Q&A without an analyst authoring each one

- Success depends on connected data sources, governed data layers, and clear KPI definitions before automation runs

- Most failures trace back to dirty source data, over-automation without human review, or ungoverned AI analysis

- Sylus pairs automated delivery with AI analysis grounded in your dbt models, so reports are fast and verifiably accurate

What Is Automated Client Reporting?

Automated client reporting is a system that connects to live data sources, applies predefined logic or AI analysis, and generates formatted performance reports for clients—either on schedule or on demand—without requiring manual data compilation each cycle.

The outcome: clients receive consistent, accurate, and timely visibility into performance metrics, while internal teams shed the repetitive work of pulling and reformatting data.

It's worth distinguishing this from two things it's often confused with:

- Dashboards are passive — clients have to go check them. Automated reporting pushes insights to clients on a schedule.

- Data warehouses store raw data but don't generate formatted deliverables or narrative summaries.

AI-enhanced reporting takes this further by adding interpretation on top of automation: flagging anomalies, generating written summaries, and answering questions without human authoring.

Why AI-Enhanced Reporting Changes the Value Equation for Client-Facing Teams in 2026

Five forces are reshaping how client-facing teams report in 2026 — and manual processes can't keep up with any of them.

The Scale Problem

A typical client's data is spread across five to fifteen platforms. The average company now manages 275 SaaS applications, while the marketing technology landscape encompasses 13,080 distinct products, after 7,258% growth over twelve years. Manually unifying this data for reporting is no longer viable.

AI Changes the Value Proposition

Traditional automation only assembles and delivers data. AI-enhanced reporting identifies why a metric changed, flags patterns requiring attention, and generates plain-language summaries non-technical clients can act on—without an analyst writing each one manually.

Client Retention and Trust

Consistent reporting is closely tied to retention. Agencies using structured, automated white-label reporting report up to a 40% reduction in client churn, in line with the 96% of buyers who demand transparency as a condition of loyalty. In tight economies, retaining existing clients costs far less than acquiring new ones, and reporting quality is one of the clearest signals of account health that clients can see.

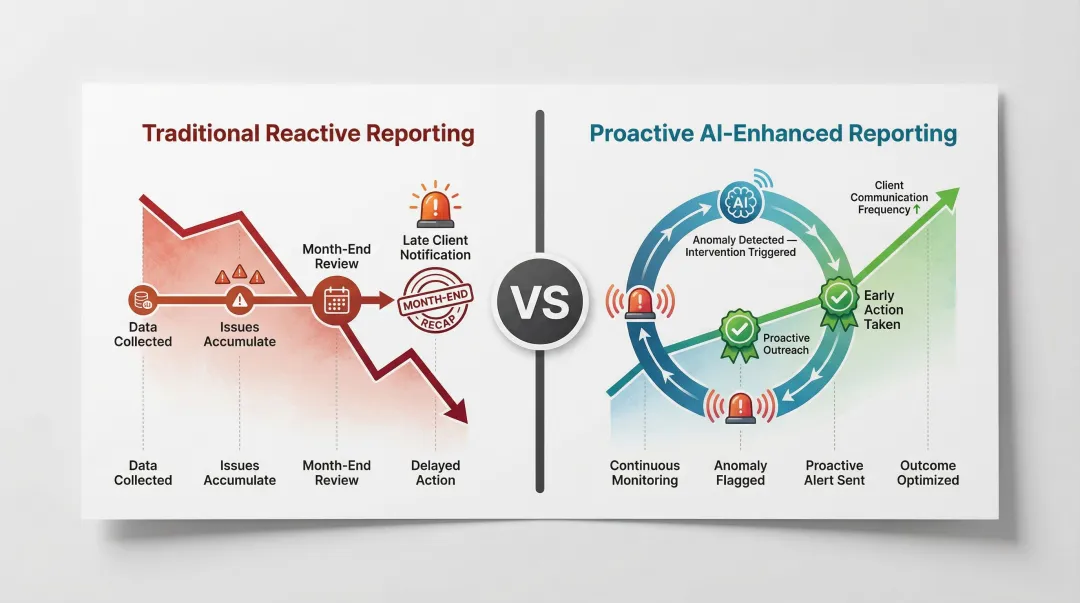

Reactive to Proactive Reporting

AI alert systems notify teams when performance crosses defined thresholds, ideally before the client notices. This turns reporting from a monthly recap into an ongoing communication layer: instead of explaining what went wrong after the fact, account teams can surface issues, offer context, and recommend next steps while there is still time to act. That shift from post-mortem to proactive update directly shapes how clients perceive the value of the relationship.

The 2026 Difference: Governed Context

Modern platforms ground AI analysis in verified data definitions, such as dbt models and documentation. When the AI answers "what drove the spike in CAC last week," it uses the same definition of CAC the team and client agreed on, not a hallucinated interpretation. This matters at scale: dbt Labs now serves over 50,000 teams weekly, and 80% of data practitioners use AI in their workflows while relying on semantic layers to keep its output accurate.
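
To make "governed context" concrete, here is a minimal Python sketch of metrics-as-code, assuming a team has agreed that CAC means paid media spend divided by new customers in the same period. The `MetricDefinition` class, the `GOVERNED_METRICS` registry, and `resolve_metric` are hypothetical stand-ins, not dbt's or Sylus's API; the point is that every report and every AI answer resolves a metric name through one approved definition, and refuses when none exists.

```python
from dataclasses import dataclass
from typing import Callable, Mapping


@dataclass(frozen=True)
class MetricDefinition:
    """One agreed-upon metric definition (hypothetical stand-in for a governed model)."""
    name: str
    description: str
    compute: Callable[[Mapping[str, float]], float]


def _cac(row: Mapping[str, float]) -> float:
    # Assumed definition: paid media spend / new customers in the same period
    if row["new_customers"] == 0:
        raise ValueError("CAC is undefined when no new customers were acquired")
    return row["spend"] / row["new_customers"]


# Hypothetical registry: the single place where metric meaning is decided
GOVERNED_METRICS = {
    "cac": MetricDefinition(
        name="cac",
        description="Paid media spend divided by new customers in the period",
        compute=_cac,
    ),
}


def resolve_metric(name: str, row: Mapping[str, float]) -> float:
    # Refuse to answer about any metric without an approved definition
    if name not in GOVERNED_METRICS:
        raise KeyError(f"No governed definition for metric '{name}'")
    return GOVERNED_METRICS[name].compute(row)


print(resolve_metric("cac", {"spend": 12000.0, "new_customers": 80.0}))  # 150.0
```

In a dbt project the equivalent definition would live in version-controlled SQL and YAML files rather than a Python dict, but the design choice is the same: the AI layer looks definitions up instead of inferring them.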

How AI-Enhanced Client Reporting Works

Raw data from connected sources enters a unified data layer, is validated against governed definitions, processed into metrics, then passed to an AI layer that generates visualizations, written summaries, and anomaly flags. These are packaged and delivered via scheduled email, Slack, embedded dashboard, or on-demand query.
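
The flow in that paragraph can be sketched as a chain of small functions. This is a minimal illustration under stated assumptions: the source names, the `spend` and `conversions` fields, and the CPA target are hypothetical, and `interpret` uses a fixed text template where a real platform would call an AI model.

```python
from dataclasses import dataclass, field


@dataclass
class Report:
    client: str
    metrics: dict
    summary: str = ""
    anomalies: list = field(default_factory=list)


def ingest(sources: dict) -> list:
    # Unified data layer: flatten every source's rows and tag their origin
    return [{**row, "source": name} for name, rows in sources.items() for row in rows]


def validate(rows: list) -> list:
    # Drop rows missing fields the governed definitions depend on
    return [r for r in rows if r.get("spend") is not None and r.get("conversions") is not None]


def compute_metrics(rows: list) -> dict:
    spend = sum(r["spend"] for r in rows)
    conversions = sum(r["conversions"] for r in rows)
    cpa = spend / conversions if conversions else float("nan")
    return {"spend": spend, "conversions": conversions, "cpa": cpa}


def interpret(client: str, metrics: dict, cpa_target: float) -> Report:
    # Stand-in for the AI layer: a fixed template plus one threshold check
    report = Report(client=client, metrics=metrics)
    report.summary = (
        f"{client}: {metrics['conversions']:.0f} conversions at ${metrics['cpa']:.2f} CPA."
    )
    if metrics["cpa"] > cpa_target:
        report.anomalies.append(f"CPA above target of ${cpa_target:.2f}")
    return report


def deliver(report: Report) -> str:
    # Stand-in for email, Slack, or portal delivery: just render the text
    return "\n".join([report.summary] + [f"ALERT: {a}" for a in report.anomalies])


sources = {
    "search_ads": [{"spend": 500.0, "conversions": 20}],
    "social_ads": [{"spend": 300.0, "conversions": 10}, {"spend": 100.0, "conversions": None}],
}
print(deliver(interpret("Acme", compute_metrics(validate(ingest(sources))), cpa_target=25.0)))
```

Note that `validate` silently drops the incomplete `social_ads` row in this example; a production pipeline would log or alert on it rather than quietly shrink the totals.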

Three layers feed into every report:

- Data integration: connects ad platforms, CRMs, databases, and data warehouses into a single source

- Governed context: applies documented business logic and dbt models to define what metrics mean

- Report templates: control what the AI analyzes and surfaces for each client

The AI layer doesn't just assemble numbers. It interprets metric movement, compares against historical baselines, validates assumptions against the governed data model, and generates narrative output. Platforms like Sylus ground this analysis in dbt models and documentation, so the AI operates within definitions the data team has already approved — not unchecked assumptions.
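
Comparing a metric against its historical baseline is the most mechanical part of that interpretation step. As a minimal sketch, assuming a trailing window of past values and a z-score cutoff of 2 (both hypothetical choices), the helpers below compute the percentage change and flag movements outside normal variance; `narrate` is a template stand-in for AI-generated prose.

```python
from statistics import mean, stdev


def compare_to_baseline(history: list, current: float, z_threshold: float = 2.0) -> dict:
    """Compare the latest value with a trailing baseline (hypothetical helper)."""
    if len(history) < 2:
        raise ValueError("Need at least two historical values to estimate variance")
    baseline = mean(history)
    spread = stdev(history)  # sample standard deviation of the window
    pct_change = (current - baseline) / baseline * 100 if baseline else float("inf")
    if spread:
        z = (current - baseline) / spread
    else:
        # A perfectly flat history: any movement at all is unusual
        z = 0.0 if current == baseline else float("inf")
    return {
        "baseline": baseline,
        "pct_change": pct_change,
        "z_score": z,
        "is_anomaly": abs(z) >= z_threshold,
    }


def narrate(metric: str, result: dict) -> str:
    direction = "up" if result["pct_change"] >= 0 else "down"
    text = f"{metric} is {direction} {abs(result['pct_change']):.1f}% versus its trailing average."
    if result["is_anomaly"]:
        text += " This is outside normal variance and should be reviewed."
    return text


result = compare_to_baseline([100, 104, 98, 102, 96], 130)
print(narrate("CAC", result))
```

A z-score over a short window ignores seasonality and trend, which is one reason a human review step still matters before anything reaches the client.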

Reports can be scheduled (daily, weekly, monthly), triggered by anomaly alerts, or generated on demand via natural language queries. Distribution options include email, Slack, shareable links, or embedding directly into client portals.
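
The three triggers in that paragraph (schedule, anomaly alert, on-demand request) reduce to a simple dispatch rule. This sketch assumes hypothetical cadence names and defaults (weekly reports on Mondays, monthly reports on the 1st); it is not any scheduler's actual configuration format.

```python
from datetime import date


def is_due(cadence: str, today: date, weekday: int = 0, month_day: int = 1) -> bool:
    """True if a scheduled report should run today.

    weekday follows Python's date.weekday() convention (Monday == 0).
    """
    if cadence == "daily":
        return True
    if cadence == "weekly":
        return today.weekday() == weekday
    if cadence == "monthly":
        return today.day == month_day
    raise ValueError(f"Unknown cadence: {cadence!r}")


def should_send(cadence: str, today: date, anomaly_detected: bool, on_demand: bool = False) -> bool:
    # Any one trigger is enough: an explicit request, an alert, or the schedule
    return on_demand or anomaly_detected or is_due(cadence, today)


print(should_send("weekly", date(2026, 3, 2), anomaly_detected=False))  # True (a Monday)
print(should_send("monthly", date(2026, 3, 3), anomaly_detected=False))  # False
```

A `month_day` above 28 would silently skip shorter months; a production scheduler would need an explicit rule for that case.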

Clients move from receiving a static PDF at month-end to continuous access to governed, AI-interpreted performance data, with the ability to ask follow-up questions and receive validated answers in seconds. Here is how to put that pipeline in place.

Step 1: Connect and Govern Your Data Sources

Before any automation runs, connect all relevant data sources to a centralized data layer and define the governed context—the documented business definitions, metric logic, and dbt models—that the AI will use as its source of truth. Without this step, AI-generated summaries may be fast but unreliable. This foundation phase determines the accuracy of everything downstream.
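
Connecting sources largely means mapping each platform's field names onto one shared schema before any metric is computed. The sketch below assumes two hypothetical sources, `search_ads` and `social_ads`, with made-up field names; real connector payloads differ by vendor and API version.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class UnifiedRow:
    client_id: str
    day: date
    channel: str
    spend: float
    conversions: int


# Hypothetical source field -> unified field mappings (not real vendor schemas)
FIELD_MAPS = {
    "search_ads": {"cost": "spend", "conv": "conversions", "date": "day"},
    "social_ads": {"amount_spent": "spend", "purchases": "conversions", "date_start": "day"},
}


def normalize(source: str, client_id: str, record: dict) -> UnifiedRow:
    # Rename fields, then coerce types so every source looks identical downstream
    mapping = FIELD_MAPS[source]
    renamed = {target: record[field_name] for field_name, target in mapping.items()}
    return UnifiedRow(
        client_id=client_id,
        day=date.fromisoformat(renamed["day"]),
        channel=source,
        spend=float(renamed["spend"]),
        conversions=int(renamed["conversions"]),
    )


row = normalize("social_ads", "acme", {"amount_spent": "120.50", "purchases": "4", "date_start": "2026-03-02"})
print(row)
```

An unmapped source or a missing field raises `KeyError` here, which is deliberate: failing loudly at ingestion is cheaper than a wrong number in a client report.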

Step 2: Define Report Templates, KPIs, and Delivery Rules

With governed data in place, configure what each client's report will contain: the KPIs that matter to their business outcomes, visualization formats, delivery schedule, and who receives which version. This is also where alert thresholds are set—defining what constitutes an anomaly worth flagging versus normal variance.
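
A per-client template and its alert thresholds can be expressed as plain data. In this minimal sketch, `ReportTemplate`, `AlertRule`, and the percentage limits are hypothetical; each rule defines the band of period-over-period change treated as normal variance, and anything outside it is flagged.

```python
from dataclasses import dataclass, field


@dataclass
class AlertRule:
    kpi: str
    max_pct_change: float  # movements within +/- this percentage are normal variance


@dataclass
class ReportTemplate:
    client: str
    kpis: list
    cadence: str
    recipients: dict  # audience -> list of email addresses
    alerts: list = field(default_factory=list)


def flag_anomalies(template: ReportTemplate, current: dict, previous: dict) -> list:
    flags = []
    for rule in template.alerts:
        prev, curr = previous.get(rule.kpi), current.get(rule.kpi)
        if not prev or curr is None:
            continue  # no usable prior value, so no percentage change
        change = (curr - prev) / prev * 100
        if abs(change) > rule.max_pct_change:
            flags.append(f"{rule.kpi} moved {change:+.1f}% (limit ±{rule.max_pct_change:.0f}%)")
    return flags


acme = ReportTemplate(
    client="Acme",
    kpis=["cpa", "roas"],
    cadence="weekly",
    recipients={"executive": ["cmo@example.com"], "operations": ["ppc@example.com"]},
    alerts=[AlertRule("cpa", 15), AlertRule("roas", 20)],
)
print(flag_anomalies(acme, current={"cpa": 30, "roas": 3.6}, previous={"cpa": 25, "roas": 4.0}))
# ['cpa moved +20.0% (limit ±15%)']
```

Keeping templates as data rather than code makes it straightforward to give each audience its own recipients and thresholds without touching the pipeline itself.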

Step 3: Review, Customize, and Distribute

Once the automated system generates a report or alert, a designated team member reviews the AI-generated summary for accuracy and adds strategic commentary where relevant before final delivery. This human review step—even if brief—separates trusted reporting from noise. The reviewer's role shifts from data compiler to analyst and advisor.
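
The review step is easiest to enforce when the delivery code itself refuses unapproved drafts. A minimal sketch, with hypothetical class and status names: the AI summary starts as a draft, a named reviewer approves it and optionally adds commentary, and only then can it be sent.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    SENT = "sent"


@dataclass
class DraftReport:
    client: str
    ai_summary: str
    reviewer_notes: str = ""
    reviewed_by: Optional[str] = None
    status: Status = Status.DRAFT

    def approve(self, reviewer: str, notes: str = "") -> None:
        # A named reviewer signs off and may add strategic commentary
        self.reviewed_by = reviewer
        self.reviewer_notes = notes
        self.status = Status.APPROVED

    def send(self) -> str:
        # Delivery is blocked until someone has approved the AI draft
        if self.status is not Status.APPROVED:
            raise PermissionError("Report must be approved before delivery")
        self.status = Status.SENT
        body = self.ai_summary
        if self.reviewer_notes:
            body += f"\n\nAnalyst note: {self.reviewer_notes}"
        return body


draft = DraftReport("Acme", "Conversions rose 12% week over week.")
draft.approve("dana", "The lift coincides with the spring promotion launch.")
print(draft.send())
```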

Key Factors That Shape Reporting Quality

Data Source Quality and Completeness

If upstream data has gaps, duplicates, or inconsistent event definitions across platforms, no amount of AI processing will produce a trustworthy report. Data validation and cleansing at ingestion is where reporting quality is either earned or lost.
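
Checks for those three failure modes (gaps, duplicates, and inconsistent event definitions) can run before any metric is computed. A minimal sketch, assuming rows already normalized to hypothetical `day`, `channel`, and `event` fields and a governed list of allowed event names:

```python
from collections import Counter
from datetime import date, timedelta


def validate_rows(rows: list, start: date, end: date, allowed_events: set) -> list:
    """Return human-readable data quality issues (hypothetical checks)."""
    issues = []

    # Duplicates: the same (day, channel, event) key loaded more than once
    counts = Counter((r["day"], r["channel"], r["event"]) for r in rows)
    for (d, channel, event), n in counts.items():
        if n > 1:
            issues.append(f"{n} duplicate rows for {d.isoformat()} {channel} {event}")

    # Gaps: days in the reporting window with no rows at all
    seen = {r["day"] for r in rows}
    day = start
    while day <= end:
        if day not in seen:
            issues.append(f"missing data for {day.isoformat()}")
        day += timedelta(days=1)

    # Inconsistent definitions: event names outside the governed list
    for name in sorted({r["event"] for r in rows} - allowed_events):
        issues.append(f"unrecognized event name '{name}'")

    return issues


rows = [
    {"day": date(2026, 3, 1), "channel": "search", "event": "purchase"},
    {"day": date(2026, 3, 1), "channel": "search", "event": "purchase"},
    {"day": date(2026, 3, 3), "channel": "social", "event": "Purchase"},
]
for issue in validate_rows(rows, date(2026, 3, 1), date(2026, 3, 3), {"purchase"}):
    print(issue)
```

Blocking the report run when this list is non-empty, rather than only logging it, is what keeps these issues out of client-facing numbers.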

Governed Context and Metric Alignment

The most common cause of conflicting numbers in automated reports is that different systems define the same metric differently (for example, "conversion" in Meta vs. in the CRM). A governed data layer—such as one built on dbt models—ensures all AI analysis and visualizations reference the same agreed-upon definitions.

| Architecture Feature | Traditional BI Reporting | Governed Semantic Layer (e.g., dbt) |
|---|---|---|
| Metric Definition Location | Siloed within individual BI tools | Centralized in the data modeling layer (metrics-as-code) |
| Version Control | Often non-existent; changes overwrite logic | Git-based version control with branching and CI/CD testing |
| AI Readiness | High risk of AI hallucinations due to missing context | Provides machine-readable metadata, relationships, and guardrails |
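
One way a governed layer resolves the "conversion" conflict is to decide, in one place, which raw events count. A minimal sketch with hypothetical source and event names: each tool's own notion of a conversion is replaced by a count under the shared definition.

```python
# Hypothetical governed definition: which raw event names count as a
# "conversion" for each source. Agreed once, reused by every report.
CONVERSION_EVENTS = {
    "ads_platform": {"purchase"},
    "crm": {"closed_won"},
}


def governed_conversions(events: list) -> dict:
    """Count conversions per source using only the governed event names."""
    counts = {source: 0 for source in CONVERSION_EVENTS}
    for event in events:
        if event["name"] in CONVERSION_EVENTS.get(event["source"], set()):
            counts[event["source"]] += 1
    return counts


events = [
    {"source": "ads_platform", "name": "purchase"},
    {"source": "ads_platform", "name": "lead"},  # a platform might also call this a conversion
    {"source": "crm", "name": "closed_won"},
    {"source": "crm", "name": "opportunity_created"},
]
print(governed_conversions(events))  # {'ads_platform': 1, 'crm': 1}
```

Counts can still differ between sources (for example, because of attribution windows), but every report now measures the same thing under the same name.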

Security and Compliance Requirements

For agencies and enterprises handling sensitive client financial, health, or behavioral data, the reporting platform must meet contractually required security standards. Look for platforms that hold a SOC 2 Type II attestation and are HIPAA compliant, offer self-hosted deployment options, and do not use customer data to train AI models. Sylus meets all three requirements by design.

Reporting Frequency and Audience Matching

Operational teams may need daily metric digests while executives need monthly narrative summaries. Mismatching report depth and frequency to the audience reduces engagement and increases the volume of manual clarification requests—which is exactly what automation is supposed to eliminate.

Common Mistakes and When Automated Reporting Falls Short

The Setup Trap: Assuming Connection Equals Completion

Most teams assume that connecting a reporting tool and scheduling delivery is enough. In practice, automation doesn't fix bad data — it scales it. When reports run on poorly governed or uncleaned sources, incorrect numbers circulate with more consistency than the manual errors they were meant to replace.

Gartner estimates that poor data quality costs organizations an average of $12.9 million annually. Automation amplifies whatever is in the data layer, good or bad.

The Over-Automation Risk: Removing Human Review

Cutting the analyst out entirely is the most common over-automation mistake. AI-generated summaries can mischaracterize why a metric changed when underlying event logic is ambiguous, or fail to distinguish a genuine performance issue from a tracking anomaly. A brief review before delivery catches these cases and maintains client trust.
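
A pre-delivery check can at least route suspicious cases to the reviewer. The heuristic below is a hypothetical sketch with arbitrary thresholds: if spend and clicks hold steady while conversions collapse, a broken tag or pixel is a more likely explanation than a real performance change.

```python
def triage_conversion_drop(spend_pct: float, clicks_pct: float, conversions_pct: float) -> str:
    """Rough triage of a period-over-period conversion drop (thresholds are assumptions)."""
    if conversions_pct > -20:
        return "no significant drop"
    upstream_stable = abs(spend_pct) < 10 and abs(clicks_pct) < 10
    if upstream_stable and conversions_pct <= -80:
        # Traffic arrived as usual but almost nothing was recorded downstream
        return "possible tracking issue: verify tags before reporting"
    return "possible performance issue: investigate before reporting"


print(triage_conversion_drop(spend_pct=2, clicks_pct=-3, conversions_pct=-95))
print(triage_conversion_drop(spend_pct=-40, clicks_pct=-35, conversions_pct=-45))
```

Either label is a prompt for a human, not a conclusion; the point is that the report does not go out with an AI-written explanation until someone has checked which case applies.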

A 2026 Liquibase report found that while 96.5% of organizations allow AI to interact with production databases, only 28.1% enforce standardized governance, and 64.3% have experienced data quality failures.

When Automation Isn't Enough

Recurring reports are a natural fit for automation. High-stakes moments are not. Raw automated output falls short in situations like:

- A major campaign pivot requiring strategic reframing

- A performance decline that needs root cause analysis

- A budget expansion pitch where narrative and judgment matter

Automated reporting handles the cadence. Deliberate, human-authored analysis handles the moments that affect client relationships and decisions.

Conclusion

AI-enhanced automated client reporting replaces the manual cycle of data collection and delivery with a governed, AI-interpreted workflow that gives clients consistent visibility while freeing internal teams to focus on strategy rather than production.

Turning on a scheduling tool does not, by itself, deliver the value of AI-enhanced reporting. That value requires:

- A data governance foundation with approved definitions

- Clear KPI definitions aligned across teams

- Appropriate delivery configurations for each client

- A human review layer before reports go out

Teams that treat automation as a foundation to build on — rather than a destination in itself — get the most durable results.

Platforms like Sylus demonstrate this approach by grounding AI analysis in dbt models and documentation, ensuring that automated insights operate within the same data definitions the data team has already approved. When automation speed is paired with that kind of governance, reports stop being a liability and start being a reliable signal clients can act on.

Frequently Asked Questions

What are the 4 types of reports?

The four main categories are operational, financial, analytical, and marketing/campaign reports. Automated systems can generate all four from connected data sources, with operational reports using real-time granular data while analytical reports aggregate historical data for forecasting.

Can ChatGPT create a report?

ChatGPT can draft report structures and narratives from data you provide it, but it lacks native data connections and governance—making it useful as a writing assistant but not a replacement for a purpose-built reporting platform. Dedicated platforms connect directly to live data sources and validated business definitions, delivering accuracy that conversational AI cannot replicate on its own.

What are the 4 pillars of automation?

The four pillars are data collection, processing, output generation, and distribution. AI-enhanced reporting adds a fifth — interpretation — where the system explains what the data means through natural language summaries and anomaly detection.

How is AI-enhanced reporting different from traditional automated reporting?

Traditional automation handles scheduling and formatting, while AI-enhanced reporting adds natural language query, anomaly detection, AI-generated narrative summaries, and the ability to validate analysis against governed data definitions. The difference is between delivering numbers on schedule versus delivering interpreted insights grounded in verified business logic.

How do I ensure data accuracy in automated client reports?

Implement data validation at ingestion, use a governed context layer (such as dbt-documented metric definitions), and maintain a human review step before reports are distributed to clients. Benchmarks of natural language AI queries have reported significantly higher accuracy when queries run against a semantic layer, largely because it removes the conflicting metric definitions that commonly plague automated reporting.

Is automated client reporting secure enough for sensitive business data?

Security depends on the platform. Look for SOC 2 Type II and HIPAA compliance, confirm the vendor does not train AI models on your data, and check whether self-hosted deployment is available for the highest-risk use cases. Sylus, for example, meets all three criteria.