Introduction

Most organizations are running AI experiments in 2025. Far fewer are seeing results that show up in the numbers. For data teams, that gap is where the real opportunity lives.

The most cited 2025 AI research—McKinsey's Global Survey and OpenAI's State of Enterprise AI report—points to a clear disconnect: 88% of organizations now use AI in at least one business function, yet only about one-third have begun scaling it across the enterprise. Most are stuck in pilot mode, unable to turn early experiments into measurable business value.

This post breaks down the most critical AI reports and insights of 2025, covering:

- Where adoption actually stands and what's working

- How AI is generating real value in data and reporting workflows

- What separates high performers from everyone else

- How data teams can act on these findings today

TLDR

- 88% of organizations use AI, but only 30% have reached the scaling phase and 7% have fully scaled; most remain in experimentation or pilot mode

- Workers save 40-60 minutes daily using AI, with data roles seeing 60-80 minutes saved

- High performers redesign workflows from scratch rather than layering AI on top of broken processes

- Inaccuracy tops the list of AI risks—governed, validated analytics are essential for scaling safely

The State of AI Adoption in 2025: Widespread But Not Yet Deep

Adoption Is Broad, But Scaling Remains Elusive

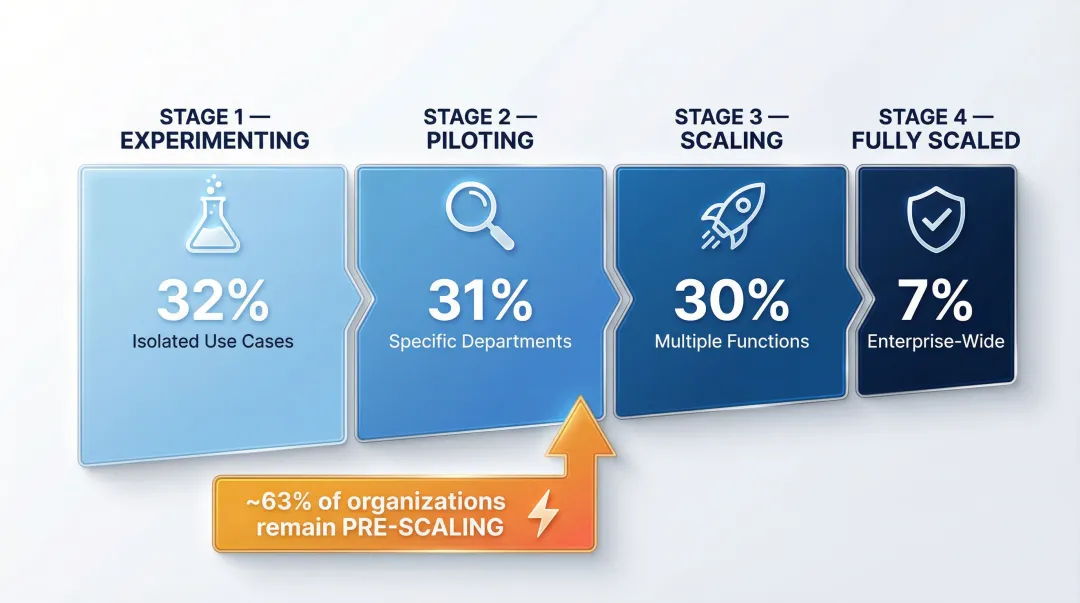

According to McKinsey's 2025 Global Survey, 88% of organizations report regular AI use in at least one business function, up from 78% in 2024. This represents significant year-over-year growth, but the distribution reveals a critical bottleneck:

- 32% are experimenting with AI in isolated use cases

- 31% are piloting AI in specific departments

- 30% are scaling AI across multiple functions

- 7% have fully scaled AI enterprise-wide

The pilot trap: approximately two-thirds of organizations have not yet begun scaling AI across the enterprise. They're stuck testing, iterating, and running proofs of concept without capturing systematic value.
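The two-thirds figure follows directly from the stage breakdown above. As a quick check, this minimal sketch adds up the shares, treating "experimenting" and "piloting" as the pre-scaling stages (the grouping and variable names are illustrative, not McKinsey's own):

```python
# Stage shares as quoted above from McKinsey's 2025 Global Survey (percent of respondents).
stage_share = {
    "experimenting": 32,
    "piloting": 31,
    "scaling": 30,
    "fully_scaled": 7,
}

# Stages treated as "not yet scaling" for this illustration.
pre_scaling = ("experimenting", "piloting")

not_scaling = sum(stage_share[stage] for stage in pre_scaling)
scaling_or_beyond = sum(
    share for stage, share in stage_share.items() if stage not in pre_scaling
)

print(f"Not yet scaling: {not_scaling}%")
print(f"Scaling or fully scaled: {scaling_or_beyond}%")
```

The pre-scaling stages sum to 63%, which is where "approximately two-thirds" comes from.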

The Rise of Agentic AI

Beyond traditional AI applications, 62% of survey respondents say their organizations are experimenting with AI agents—autonomous systems that can execute multi-step tasks with minimal human intervention. However, only 23% report scaling at least one agent, and no more than 10% of respondents report scaling agents in any single business function.

Where agentic AI is taking hold:

- IT operations and infrastructure management

- Knowledge management and documentation

- Customer service and support automation

These functions share a common trait: the tasks are repetitive, rule-bound, and well-documented enough for autonomous execution to succeed at scale.

The Size Gap: Larger Companies Lead in Scaling

Nearly half of respondents from companies with more than $5 billion in revenue have reached the scaling phase, compared with just 29% of those with less than $100 million in revenues.

This disparity matters enormously for fast-growing startups and mid-market data teams. Smaller organizations face resource constraints, less mature data infrastructure, and fewer dedicated AI roles—making it harder to move from pilot to production. Staying in experimentation mode carries a real cost: larger competitors are already compounding the gains from production-grade AI deployment.

Usage Depth Is Increasing, Not Just Breadth

What that scaled deployment actually looks like is visible in OpenAI's enterprise data, which shows a fundamental shift from casual querying to integrated, repeatable processes:

- Weekly ChatGPT Enterprise messages grew ~8x since November 2024

- ChatGPT Enterprise seats grew ~9x year-over-year

- Weekly users of Custom GPTs and Projects grew ~19x year-to-date

- ~20% of Enterprise messages now occur via Custom GPTs or Projects

That 19x growth in structured workflow features signals a real behavioral shift. Organizations are codifying institutional knowledge into persistent, reusable AI tools—not just asking one-off questions.

Teams are building Custom GPTs for specific business processes, organizing workflows into Projects, and embedding AI into daily operations. The experimentation phase, for these users, is over.

AI in Data Reporting and Analytics: Where Real Value Is Being Captured

The Productivity Proof Point

Workers report saving 40-60 minutes per active day using ChatGPT Enterprise, with data science, engineering, and communications roles seeing 60-80 minutes saved. For data analysts who historically spend significant time on manual report preparation, query writing, and data wrangling, those daily minutes add up quickly to meaningful capacity gains.

What this means in practice:

- Less time writing SQL queries for ad-hoc requests

- Faster report generation and insight summarization

- More time for strategic analysis instead of data preparation

- Ability to handle higher volumes of business questions without adding headcount
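To get a feel for how the daily savings quoted above accumulate, here is a rough back-of-the-envelope calculation. The 20 active days per month is an assumption for illustration only and does not come from either report:

```python
def monthly_hours_saved(minutes_per_day: float, active_days: int = 20) -> float:
    """Convert minutes saved per active day into hours saved per month.

    active_days defaults to 20, an illustrative assumption rather than a reported figure.
    """
    return minutes_per_day * active_days / 60


# Reported ranges: 40-60 minutes for workers overall, 60-80 minutes for data roles.
for label, minutes in [("overall, low", 40), ("overall, high", 60), ("data roles, high", 80)]:
    print(f"{label}: {monthly_hours_saved(minutes):.1f} hours per month")
```

Under that assumption, the high end of the overall range works out to 20 hours per person per month.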

Where Revenue and Cost Impact Occur

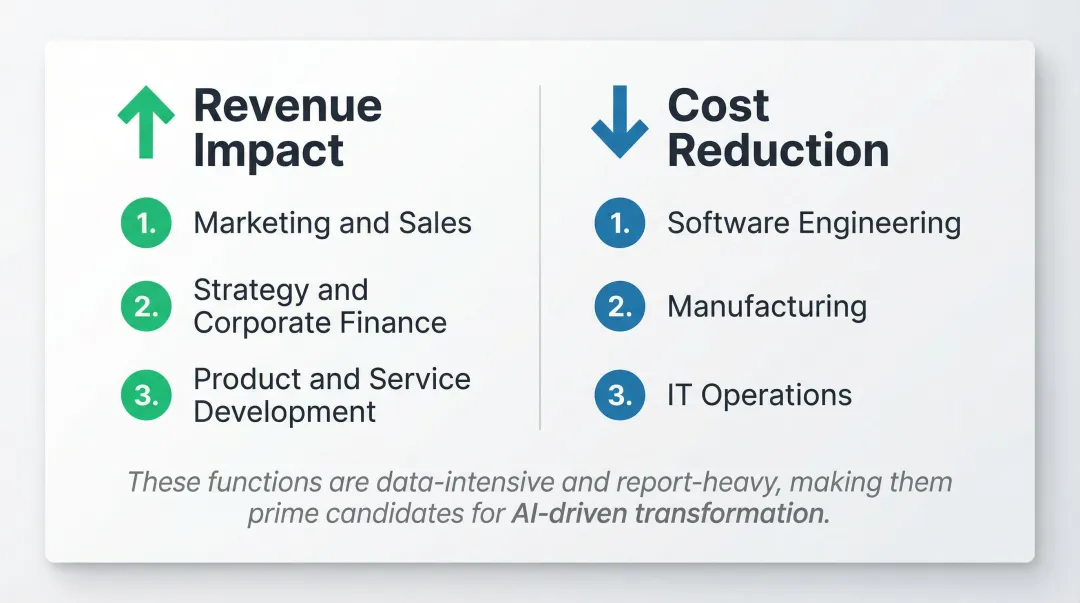

McKinsey's research reveals that AI value capture is highly concentrated in specific functions:

Revenue increases most commonly reported in:

- Marketing and sales

- Strategy and corporate finance

- Product and service development

Cost decreases most commonly reported in:

- Software engineering

- Manufacturing

- IT operations

These functions share a common trait: they're data-intensive and report-heavy. Marketing teams need campaign performance analytics. Finance teams require forecasting and variance analysis. Software engineering teams track velocity and quality metrics. Each one runs on data — and each one benefits when that data is faster to access and easier to interpret.

From Answering Questions to Exploring Data

Perhaps most significantly, 75% of workers report being able to complete tasks they previously could not perform—including coding, spreadsheet automation, and agent design.

In the analytics context, this translates to non-technical business users querying data without needing SQL or a dedicated analyst. A sales manager can ask "Show me customer retention by segment" and receive a visualization in seconds. A marketing director can request "Compare campaign ROI across channels" without submitting a ticket to the data team.

Analytics teams shift roles as a result: less time fielding requests, more time building the governed, AI-accessible data systems that let others self-serve.

The Reporting Automation Opportunity

AI report generation is moving from static dashboards to dynamic, AI-generated summaries and insights. According to Gartner, 75% of new analytics content will be contextualized for intelligent applications through generative AI by 2027, and over 50% of organizations already use AI tools for automated insights and natural language queries.

The shift is already visible in practice. Instead of manually building dashboards and writing commentary, teams are generating complete reports — visualizations, insights, and recommendations — through AI systems that understand their business context.

Grounded AI Analytics in Practice

Platforms like Sylus demonstrate what this shift looks like in practice. Rather than generic AI outputs disconnected from business context, Sylus acts as an AI data analyst grounded in your organization's own data models via dbt integration. Teams can ask questions in plain English—"What were total sales for each sales rep from the last 12 months?"—and receive validated, governed insights that reflect actual business definitions, not hallucinated metrics.

That means AI-generated reports align with how your organization actually defines revenue, customers, churn, or any other metric — because the AI works within the validated data structures your team has already built, not around them.

What AI High Performers Do Differently

McKinsey defines "AI high performers" as organizations that attribute more than 5% of EBIT to AI use and report measurable business value from it. This elite group represents only about 6% of survey respondents, but their practices offer a blueprint for scaling success.

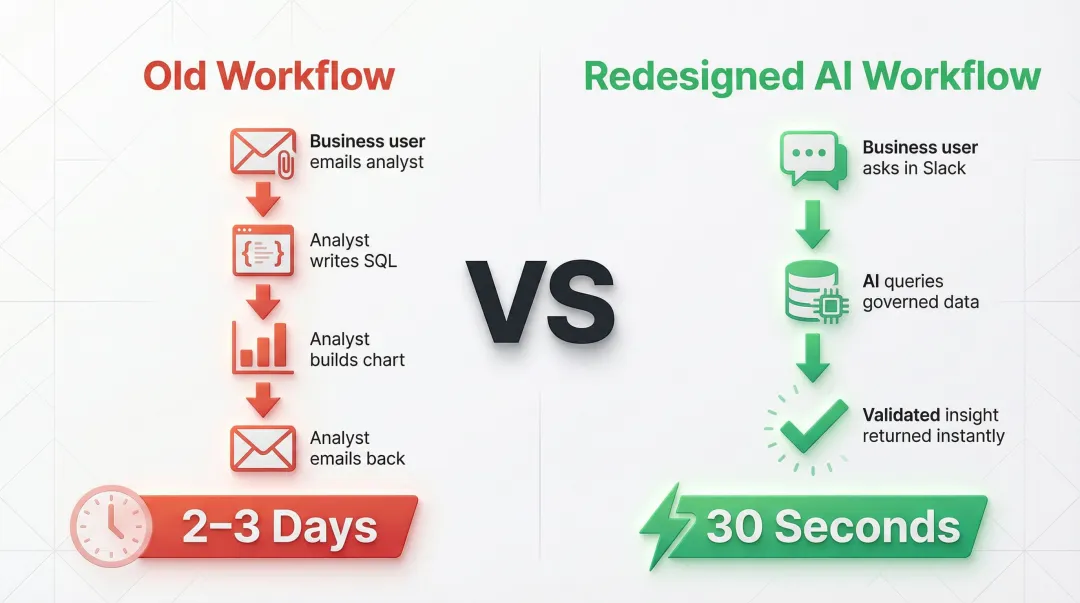

Workflow Redesign From the Ground Up

High performers are nearly three times as likely to have redesigned individual workflows from scratch (55% vs. 20% for all others). They don't layer AI on top of broken processes — they rebuild how work flows.

Example in analytics:

- Old workflow: Business user emails analyst → analyst writes SQL → analyst builds chart → analyst emails back (2-3 days)

- Redesigned workflow: Business user asks question in Slack → AI queries governed data → validated insight returned in about 30 seconds

The difference isn't just speed—it's structural. The redesigned workflow eliminates handoffs, reduces errors, and scales without adding headcount.

The Ambition Gap: Growth vs. Efficiency

High performers pursue fundamentally different objectives:

| Objective | High Performers | All Others |
|---|---|---|
| Efficiency | 84% | 80% |
| Growth | 82% | 50% |
| Innovation | 50% | 14% |

While nearly everyone uses AI for efficiency, high performers are 32 percentage points more likely to pursue growth objectives and 36 points more likely to pursue innovation objectives. They're not just cutting costs—they're using AI to enter new markets, launch new products, and reshape their business models entirely.
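The gaps quoted above are percentage-point differences, not ratios, and the two can tell different stories. This short sketch computes both from the table values (the variable names are illustrative):

```python
# (high performers %, all others %) for each objective, taken from the table above.
objectives = {
    "Efficiency": (84, 80),
    "Growth": (82, 50),
    "Innovation": (50, 14),
}

# Percentage-point gap: simple difference between the two shares.
gaps = {name: hp - others for name, (hp, others) in objectives.items()}

# Relative likelihood: how many times more common the objective is among high performers.
ratios = {name: hp / others for name, (hp, others) in objectives.items()}

for name in objectives:
    print(f"{name}: {gaps[name]} points, {ratios[name]:.2f}x")
```

Growth and innovation show similar point gaps, but in relative terms the innovation gap is much wider because so few other organizations pursue it.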

Leadership Ownership and Investment

High performers benefit from massive executive buy-in:

- 48% strongly agree that senior leaders demonstrate ownership of AI initiatives (vs. 16% for others)

- ~33% invest more than 20% of their digital budgets in AI

This top-down commitment translates into faster decision-making, more resources for experimentation, and organizational permission to redesign workflows without friction. Organizations where the C-suite owns AI outcomes — not just IT — are the ones closing the gap between pilot and production.

The Risks and Roadblocks Slowing Teams Down

Overall, 51% of respondents from organizations using AI say their organizations have seen at least one instance of a negative consequence in the past year. Understanding these risks is critical for data teams deploying AI analytics.

Inaccuracy: The Most Common Risk

Nearly one-third of all respondents report consequences stemming from AI inaccuracy—making it the most commonly experienced risk. For data and analytics teams, this is especially consequential. Wrong numbers in a report can drive poor business decisions, misallocate resources, or damage stakeholder trust.

Why inaccuracy happens in analytics:

- AI hallucinates metrics that don't exist in the data

- Calculations use incorrect business logic

- Data sources are misinterpreted or joined incorrectly

- Temporal logic errors (wrong date ranges, incorrect aggregations)

Inaccuracy is also one of the most commonly mitigated risks. Organizations are actively implementing validation workflows, human review processes, and governed data frameworks to address this challenge.
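One simple form a validation workflow can take is comparing an AI-reported figure against the same metric recomputed from governed logic, and routing mismatches to a person. The sketch below is a hypothetical illustration; the function name and the 1% tolerance are assumptions, not a practice described in the survey:

```python
import math


def needs_human_review(ai_value: float, reference_value: float, rel_tol: float = 0.01) -> bool:
    """Return True when the AI-reported figure differs from the governed
    reference value by more than the relative tolerance (1% by default)."""
    return not math.isclose(ai_value, reference_value, rel_tol=rel_tol)


# Hypothetical figures: the AI reports revenue of 1,020,000 against a governed value of 1,000,000.
if needs_human_review(1_020_000, 1_000_000):
    print("Mismatch beyond tolerance: send to an analyst before publishing.")
```

A check like this only catches disagreements in the final number; it does not explain why the two values differ.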

The Explainability Gap

While explainability ranks as the second-most-commonly-reported risk, it remains largely unmitigated—revealing a dangerous governance gap.

For data teams, this means users may receive AI-generated insights without understanding:

- How a metric was calculated

- Which data sources were used

- What assumptions were made

- Whether the calculation aligns with business definitions

This lack of transparency undermines trust and makes it difficult to debug errors or validate results. Teams using AI for data analysis need to trace how a metric was calculated and trust the underlying logic—without that, every output carries unquantifiable risk.
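One way to make those four questions answerable is to attach a small trace record to every AI-generated insight. This is a minimal sketch with hypothetical field names, not the schema of any particular tool:

```python
from dataclasses import dataclass, field


@dataclass
class InsightTrace:
    """Hypothetical record stored alongside an AI-generated insight."""
    metric: str
    value: float
    calculation: str                      # how the metric was calculated
    sources: list[str]                    # which data sources were used
    assumptions: list[str] = field(default_factory=list)
    matches_business_definition: bool = False

    def missing_fields(self) -> list[str]:
        """List the explainability gaps that should block publication."""
        gaps = []
        if not self.calculation.strip():
            gaps.append("calculation")
        if not self.sources:
            gaps.append("sources")
        if not self.matches_business_definition:
            gaps.append("business definition check")
        return gaps


trace = InsightTrace(metric="churn_rate", value=0.042, calculation="", sources=[])
print(trace.missing_fields())
```

An insight with any missing field can be held back or flagged, so that users never see a number they cannot trace.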

Organizational Readiness, Not Technology

The models are capable. The bottleneck is change management: adopting new workflows, establishing governance frameworks, training users, and building organizational muscle memory around AI-augmented processes.

For data teams specifically, this requires cross-functional collaboration, stakeholder buy-in, and a cultural shift in how organizations access and interpret data—none of which a model can provide on its own.

How Data Teams Can Start Acting on These Insights Today

Audit Your Pilot Trap

McKinsey's research shows that roughly two-thirds of organizations are still in the experimentation or piloting phases. Identify which of your AI use cases are trapped in testing, then pick one or two and redesign the surrounding workflow around them, rather than adding AI as a layer on top of existing manual processes.

Questions to ask:

- Which AI experiments have shown promise but haven't scaled?

- What workflow redesign would be required to move from pilot to production?

- What organizational barriers (approval processes, governance concerns, budget) are blocking scaling?

Once you've identified what to scale, the next question is what it scales on. That foundation is governance.

Adopt a Governance-First Approach

Teams moving from pilot to scale need AI grounded in verified, trusted business definitions. This means:

- Codify how revenue, churn, LTV, and other key metrics are calculated—tools like dbt make this auditable and shareable

- Define who owns which data, what quality standards apply, and how changes get approved

- Ensure AI-generated reports reflect actual business logic rather than statistical approximations

Sylus takes this approach by grounding all analysis in your dbt models and documentation, so generated reports align with your organization's validated definitions rather than inferred ones.
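As a generic illustration of the checklist above, the sketch below keeps metric definitions in a single registry and refuses to answer for any metric that has no governed definition. It is not dbt's or Sylus's API; the class, the registry contents, the owners, and the SQL snippets are all hypothetical:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    """A governed metric: what it is, who owns it, and how it is computed."""
    name: str
    owner: str
    sql: str
    description: str


# Hypothetical registry; in practice these definitions would live in version control.
REGISTRY = {
    "revenue": MetricDefinition(
        name="revenue",
        owner="finance-data",
        sql="SUM(amount)",
        description="Total value of completed orders.",
    ),
    "churn_rate": MetricDefinition(
        name="churn_rate",
        owner="customer-analytics",
        sql="COUNT(churned_customers) * 1.0 / COUNT(active_customers)",
        description="Share of active customers lost in the period.",
    ),
}


def resolve_metric(name: str) -> MetricDefinition:
    """Return the governed definition, or fail loudly instead of improvising one."""
    key = name.strip().lower()
    if key not in REGISTRY:
        raise KeyError(f"No governed definition for metric {name!r}")
    return REGISTRY[key]


print(resolve_metric("Revenue").owner)
```

Failing on an unknown metric is the design choice that matters here: an explicit error is easier to handle than a plausible-looking number with no agreed definition behind it.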

With governance in place, you have the baseline you need to actually measure what AI is delivering.

Measure AI Impact at the Function Level

Before claiming enterprise value, track specific metrics that demonstrate AI impact at the function level:

- Time saved per report (baseline vs. AI-assisted)

- Reduction in ad-hoc analyst requests

- Faster time-to-insight for business users

- Increase in self-service data access

- Reduction in report errors or revisions

This function-level measurement builds an internal business case for scaling AI analytics. When you can show that AI reduced report generation time by 75% or cut ad-hoc requests by 40%, budget approvals and organizational buy-in follow.
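The baseline-versus-AI-assisted comparison can be as simple as a percent-reduction calculation. In this sketch the input figures are hypothetical, chosen only to reproduce the illustrative 75% and 40% mentioned above:

```python
def percent_reduction(baseline: float, current: float) -> float:
    """Percent decrease from baseline to current (positive means improvement)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) * 100 / baseline


# Hypothetical measurements for one reporting function.
minutes_per_report = percent_reduction(baseline=120, current=30)
ad_hoc_requests = percent_reduction(baseline=50, current=30)

print(f"Report generation time reduced by {minutes_per_report:.0f}%")
print(f"Ad-hoc requests reduced by {ad_hoc_requests:.0f}%")
```

Capturing the baseline before rollout is the important step; without it, there is nothing to compare the AI-assisted figure against.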

Frequently Asked Questions

What percentage of companies are actually using AI in 2025?

88% of organizations now use AI in at least one business function, up from 78% in 2024. However, only about one-third have begun scaling AI enterprise-wide—most remain in experimentation (32%) or piloting (31%) stages.

What is the biggest challenge stopping companies from scaling AI?

The primary barriers are organizational readiness and workflow redesign—not model capability or tooling. Most organizations have not yet embedded AI deeply enough into their processes or redesigned workflows to realize measurable financial impact.

How much time can AI actually save in data reporting and analysis?

Workers report saving 40-60 minutes per active day using AI, with data science and engineering roles seeing 60-80 minutes saved. The largest time gains typically occur in report generation automation, data querying, and insight summarization.

What do AI high performers do differently from average organizations?

High performers separate themselves across four key behaviors:

- Redesign workflows end-to-end (55% vs. 20% of average organizations)

- Pursue growth and innovation goals, not efficiency alone

- Secure strong senior leadership ownership (48% vs. 16%)

- Invest more than 20% of digital budgets in AI

What are the top AI risks for data and analytics teams?

Inaccuracy is the most commonly experienced risk, with nearly one-third of organizations reporting real consequences. Explainability is widely flagged but undermitigated. For analytics teams, the core mitigation strategy is governance: grounding AI analysis in verified business definitions through frameworks like dbt documentation.