Introduction

Organizations today face a paradox: they're collecting more data than ever, yet most of it never informs a single decision. According to Seagate's 2020 Rethink Data report, 68% of enterprise data goes unleveraged: collected, stored, and ultimately ignored. Meanwhile, business leaders continue to make critical decisions on gut instinct rather than insight.

The problem isn't lack of data—it's lack of access. Data teams spend 80% of their time preparing data rather than analyzing it, creating massive bottlenecks. Business users wait days for answers to simple questions, and by the time insights arrive, the moment for action has often passed.

AI-driven data intelligence platforms are designed to break this bottleneck—by giving business users direct access to governed, trustworthy analytics without adding pressure on data teams. This guide covers what defines a modern data intelligence platform, how AI is shifting analytics from reactive reporting to proactive intelligence, and what to look for when evaluating platforms.

What Is a Data Intelligence Platform?

A data intelligence platform is an integrated system that uses AI and machine learning to collect, process, and surface actionable insights from an organization's diverse data assets. Unlike traditional data management tools that simply store and move data, or business intelligence tools that visualize historical reports, data intelligence platforms actively understand how data is produced, how it's used, and what it means in business context.

IBM defines data intelligence as combining "core data management and metadata management principles with advanced tools—such as artificial intelligence and machine learning—to help organizations understand how enterprise data is produced and used." In practice, this means data stops being something you warehouse and starts being something you interrogate.

Five Foundational Capabilities

Most data intelligence platforms share five core capabilities that work together to transform raw data into strategic assets:

- Data Integration: Connects databases, cloud warehouses, CRMs, ERP systems, and spreadsheets into a unified environment — reducing manual pipeline maintenance through broad out-of-the-box integration support.

- Metadata and Cataloging: Tracks how data is structured, documented, and used across the organization. According to Gartner's research, active metadata management can "decrease time to delivery of new data assets to users by as much as 70%."

- Data Governance: Enforces role-based access controls, audit trails, and policy rules so data usage complies with both internal standards and regulatory requirements — enabling safe access at scale, not restricting it.

- Data Quality Controls: Applies validation rules, anomaly detection, and verification workflows so insights are built on accurate, consistent data rather than corrupted or outdated records.

- AI/ML Analysis and Discovery: Lets users ask questions in plain English and receive validated answers — no SQL or technical expertise required.

Data Intelligence vs. Data Management

The distinction matters practically. Data management handles logistics: how data is collected, stored, transformed, and moved. It answers questions like "Where is this data stored?" and "How do we move it from system A to system B?"

Data intelligence answers a different set of questions: "What does this data tell us?" and "How should this inform our decision?" Where data management is infrastructure, data intelligence is the analytical layer that turns that infrastructure into a competitive advantage.

| | Data Management | Data Intelligence |
|---|---|---|
| Core function | Collect, store, move | Analyze, interpret, surface |
| Primary question | Where is the data? | What does the data mean? |
| Output | Pipelines and warehouses | Decisions and insights |
| User | Data engineers | Data teams and business users |

The Shift from Traditional BI to AI-Driven Analytics

Traditional business intelligence created a fundamental bottleneck that still paralyzes most organizations. The model was straightforward: data analysts built SQL queries, designed dashboards, and generated reports for specific business questions. When new questions arose, business users submitted tickets and waited.

The Analyst Bottleneck

This model worked when data questions were predictable and infrequent. It breaks down when every department needs data-driven answers daily. Forrester research reveals the scale of the problem: only 20% of enterprise decision-makers who should be using analytical applications actually do so. The other 80% still rely on that 20% for data sourcing, building metrics, running analytics, and delivering insights.

Data teams, meanwhile, spend the bulk of their time preparing data for analysis rather than analyzing it. Business decisions stack up in queues. By the time insights arrive, market conditions have often shifted.

What Changed: AI as the Analyst

The emergence of large language models and natural language processing changed what's possible. Instead of translating business questions into SQL manually, AI can interpret plain-English queries, explore relevant data sources, validate assumptions, and return accurate answers—all in seconds.

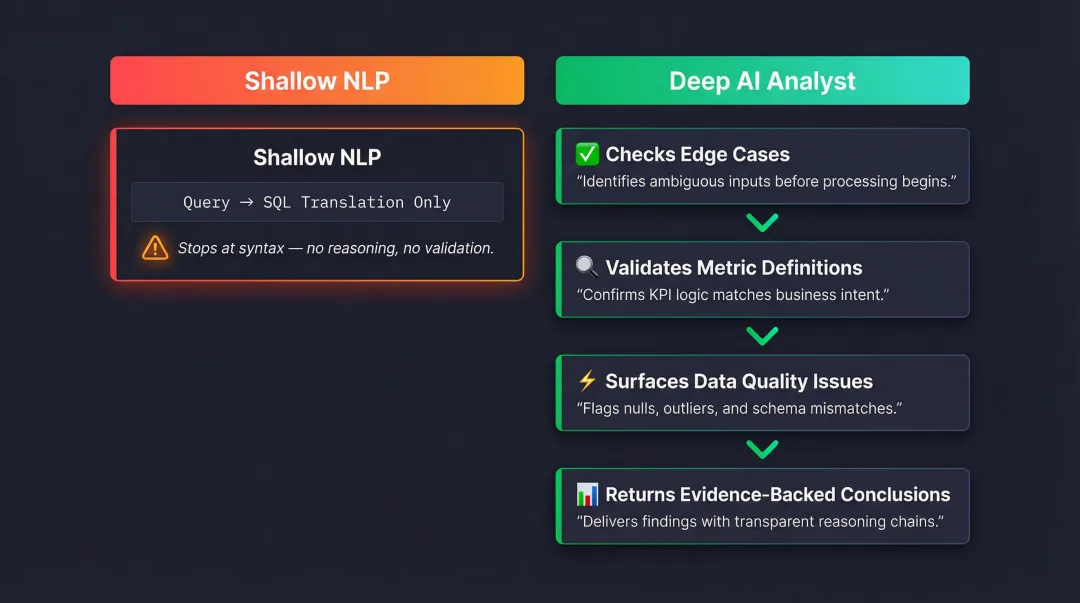

Not all implementations are equal. Adding a natural language layer on top of traditional BI tools, where the AI simply translates "show me revenue" into `SELECT SUM(revenue) FROM sales`, doesn't solve the bottleneck. It automates the translation, not the thinking.

A genuine AI analyst works the way a skilled human analyst would:

- Checks for edge cases before committing to an answer

- Validates that metric definitions match business intent

- Surfaces data quality issues that would skew results

- Returns only conclusions it can defend with evidence

This difference matters enormously for accuracy and trust.

Data Democratization at Scale

When implemented correctly, AI-driven analytics delivers what self-service BI promised but rarely achieved: the ability for business users, product managers, and executives to get analyst-quality insights without analyst intervention. McKinsey's 2024 survey shows this shift is accelerating, with 72% of organizations now using AI in at least one business function.

The competitive stakes are real. MIT Sloan research found that companies in the top quartile of "real-time-ness" achieved more than 50% higher revenue growth and net margins compared to those in the bottom quartile.

Core Capabilities of a Modern AI-Driven Data Intelligence Platform

Not all platforms claiming AI capabilities deliver genuine intelligence. Here's what separates marketing from substance:

Natural Language Querying and AI-Generated Answers

Strong implementations let users ask questions conversationally—"Who are my top customers by average order value?" or "Show me sales by rep for the last quarter"—and receive accurate, contextual answers. The AI should handle query construction, data exploration, and interpretation automatically.

What separates a deep implementation from shallow NLP:

- Shallow: Translates questions to SQL and returns whatever the query produces, regardless of whether it answers the actual question

- Deep: Validates that the query addresses the user's intent, checks for data quality issues, explores edge cases, and explains its methodology

The platform should ground analysis in documented business definitions (like dbt models) rather than guessing from raw table structures. When ambiguity exists, it should ask clarifying questions rather than making assumptions.
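The behavior described above (answer from a documented definition when the match is unique, ask when it is ambiguous) can be sketched in a few lines. This is an illustrative sketch only: the `MetricDefinition` class, the `REGISTRY` contents, the `fct_orders` table, and the substring-matching rule are all hypothetical stand-ins, not the API of dbt or of any particular platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    """A documented business metric (hypothetical stand-in for a governed definition)."""
    name: str
    description: str
    sql: str


# Hypothetical registry; in practice this would be loaded from the team's documented models.
REGISTRY = {
    "net_revenue": MetricDefinition(
        "net_revenue",
        "Sum of completed order amounts",
        "SELECT SUM(amount) FROM fct_orders WHERE status = 'completed'",
    ),
    "gross_revenue": MetricDefinition(
        "gross_revenue",
        "Sum of all order amounts",
        "SELECT SUM(amount) FROM fct_orders",
    ),
    "average_order_value": MetricDefinition(
        "average_order_value",
        "Average amount of completed orders",
        "SELECT AVG(amount) FROM fct_orders WHERE status = 'completed'",
    ),
}


def resolve_metric(term: str, registry: dict[str, MetricDefinition] = REGISTRY):
    """Return ("answer", definition) for a unique match, otherwise ask or decline."""
    needle = term.lower().replace(" ", "_")
    matches = [m for name, m in registry.items() if needle in name]
    if len(matches) == 1:
        return ("answer", matches[0])
    if len(matches) > 1:
        # Ambiguous: ask the user instead of silently picking one definition.
        options = ", ".join(sorted(m.name for m in matches))
        return ("clarify", f"'{term}' could mean: {options}. Which one?")
    # No documented definition: decline rather than guess from raw tables.
    return ("unknown", f"No documented metric matches '{term}'.")
```

Calling `resolve_metric("revenue")` matches two definitions and so returns a clarifying question, while `resolve_metric("average order value")` resolves to a single documented metric. A real system would use far richer matching, but the design choice is the same: an ambiguous or undocumented term is never answered with a guess.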

Dashboard and Visualization Generation

A strong platform generates entire dashboards from plain-English prompts—"Create a sales performance dashboard for the last 30 days"—without requiring users to manually select chart types, configure axes, or write calculations. Each generated dashboard should be:

- Shareable via links, email, or embedded directly in websites and products

- Customizable through natural language ("make this chart Christmas-themed" or "show this as a bar graph instead")

- Collaborative, with commenting, verification workflows, and version control

The AI should auto-select appropriate visualizations based on data structure, but allow users to override those choices conversationally.

Data Governance and Access Controls

Democratization without governance creates chaos. Look for platforms that enforce:

- Role-based permissions controlling who can access which data sources and metrics

- Audit trails tracking who queried what data and when

- Governed semantic layers that ground AI outputs in verified business definitions

Sylus grounds all analysis in dbt models and documentation. When the AI answers "What is our revenue?" it uses the organization's official definition, not a guess derived from column names. That governed context prevents a common failure mode: technically correct SQL results that are semantically wrong for the business.
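To make the first two controls in the list above concrete, here is a minimal sketch of a role-based permission check that writes an audit entry for every attempt. It is not any vendor's implementation; the role names, metric names, `ROLE_GRANTS` mapping, and `AuditLog` class are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-metric grants; real platforms keep these in their own policy store.
ROLE_GRANTS = {
    "sales_rep": {"pipeline_value", "closed_won_count"},
    "finance": {"net_revenue", "gross_margin", "pipeline_value"},
}


@dataclass
class AuditLog:
    """Append-only record of who asked for which metric, when, and the outcome."""
    entries: list = field(default_factory=list)

    def record(self, user: str, role: str, metric: str, allowed: bool) -> None:
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "metric": metric,
            "allowed": allowed,
        })


def authorize(user: str, role: str, metric: str, log: AuditLog) -> bool:
    """Check the role's grants and log the attempt whether or not it succeeds."""
    allowed = metric in ROLE_GRANTS.get(role, set())
    log.record(user, role, metric, allowed)
    return allowed
```

Denied requests are logged as well as granted ones, so the trail shows who tried to reach which metric, not only who succeeded. Unknown roles fall through to an empty grant set and are refused by default.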

Collaboration and Verification Features

Data intelligence isn't a solo activity. Teams need to verify metrics, annotate findings, and share analysis across technical and non-technical stakeholders. Look for:

- Metric verification workflows where team members can request review before finalizing reports

- Real-time collaboration with comments and multiplayer editing

- Collections that let teams curate approved analyses for different audiences — board KPIs, sales assets, product metrics

Over time, these capabilities shift analytics from one-off queries into a shared, institutional asset the whole organization can build on.

Alerting, Scheduling, and Proactive Delivery

Reactive analytics—where users must remember to check dashboards—misses opportunities. The shift to proactive intelligence means:

- Spike alerts notify teams when metrics exceed thresholds or show unusual patterns

- Scheduled reports deliver AI-generated summaries to email or Slack automatically

- Conversational access lets users query data directly from Slack or Teams, embedding analytics into existing workflows

This proactive approach means insights arrive when they matter, not when someone remembers to look for them.
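As a rough illustration of the spike alerts described above, the sketch below combines a fixed threshold with a simple z-score test against recent history. The function name, the default limit of 3 standard deviations, and the choice of z-score are assumptions made for this example; production systems often use more robust methods that account for seasonality.

```python
from statistics import mean, stdev


def check_spike(history, latest, threshold=None, z_limit=3.0):
    """Return a list of alert reasons for the latest metric value (empty if none)."""
    reasons = []
    # Fixed threshold: fires whenever the value exceeds a configured limit.
    if threshold is not None and latest > threshold:
        reasons.append(f"value {latest} exceeds threshold {threshold}")
    # Unusual pattern: distance of the latest value from the recent mean,
    # measured in sample standard deviations. Needs at least two points.
    if len(history) >= 2:
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(latest - mu) / sigma > z_limit:
            reasons.append(
                f"value {latest} is more than {z_limit} standard deviations "
                "from the recent mean"
            )
    return reasons
```

With a history of `[100, 102, 98, 101, 99]`, a new value of 130 and a threshold of 120 trigger both checks, while a new value of 101 triggers neither. The returned reasons could then be forwarded to whatever channel the team already uses for notifications.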

Key Benefits for Data Teams and Business Users

The shift to AI-driven data intelligence delivers measurable improvements across organizations:

Faster Time-to-Insight

Data teams reclaim the majority of their time currently lost to repetitive requests. Business users get answers in seconds rather than waiting days for a ticket to resolve. That speed changes behavior — faster answers lead to more questions, deeper exploration, and sharper decisions.

The time savings are substantial. When analysts spend 80% of their time on data preparation, any reduction in that burden creates massive capacity for strategic work.
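A quick back-of-the-envelope calculation shows why. The 40-hour week and the drop to a 60% preparation share are hypothetical inputs chosen for illustration; only the 80% starting point comes from the figure cited above.

```python
def analysis_hours(week_hours: float, prep_share: float) -> float:
    """Hours left for analysis after data preparation takes its share."""
    if not 0.0 <= prep_share <= 1.0:
        raise ValueError("prep_share must be between 0 and 1")
    return week_hours * (1.0 - prep_share)


before = analysis_hours(40, 0.80)  # 80% preparation share, as cited in the text
after = analysis_hours(40, 0.60)   # hypothetical reduction to 60%
print(f"analysis hours per week: {before:.0f} -> {after:.0f}")
```

Under these assumptions, cutting the preparation share by 20 percentage points doubles the time available for analysis, from roughly 8 to 16 hours a week.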

Reduced Dependency Bottlenecks and Broader Data Access

When any team member can safely query data through governed interfaces, organizations eliminate the analyst-as-gatekeeper model. The 80% of decision-makers currently dependent on the 20% of technical users gain independence without sacrificing accuracy.

Self-serve access only scales safely with proper governance. Platforms that ground AI in validated business definitions — like dbt models — keep self-service from producing inconsistent or inaccurate results across teams.

Improved Data Trust and Decision Quality

AI platforms that validate assumptions and surface data lineage build user confidence in the answers they act on. When users understand not just the answer, but how the platform reached it, they trust insights enough to base decisions on them.

The cost of poor data quality is well-documented:

- Gartner research puts the average annual cost at $12.9 million per organization

- MIT Sloan found companies lose 15-25% of revenue annually due to poor data quality

Platforms that improve data trust reduce these losses directly — by ensuring every answer is grounded in validated, governed context.

Why Governed Context Is the Foundation of Trustworthy AI Analytics

AI is only as reliable as the context it operates within. Without governance, even sophisticated language models can confidently generate wrong answers.

The Core Problem with Naive AI Analytics

When an AI model queries raw database tables without understanding business definitions, it can return technically accurate SQL results that are semantically incorrect. It might calculate "revenue" by summing an amount column—but if that column includes refunds, returns, or pending transactions, the result is wrong despite the query executing without errors.

The AI doesn't know that queries filtering on `users.created_at` should also exclude test accounts, that the `orders` table has a newer replacement, or that "active customer" carries a specific definition documented in your dbt models. Without that context, it guesses, and those guesses are often wrong.
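The revenue example above is easy to reproduce. The sketch below uses an in-memory SQLite database with a hypothetical `orders` table and assumes, purely for illustration, that the business defines revenue as the sum of completed orders only. Both queries run without errors; only one matches that definition.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL, status TEXT)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [
        (1, 100.0, "completed"),
        (2, 250.0, "completed"),
        (3, 40.0, "refunded"),   # refunded order still carries its original amount
        (4, 75.0, "pending"),    # not yet recognized as revenue
    ],
)

# Naive: inferred from the column name alone.
NAIVE_SQL = "SELECT SUM(amount) FROM orders"
# Governed: follows the (assumed) documented definition of revenue.
GOVERNED_SQL = "SELECT SUM(amount) FROM orders WHERE status = 'completed'"

naive_revenue = conn.execute(NAIVE_SQL).fetchone()[0]
governed_revenue = conn.execute(GOVERNED_SQL).fetchone()[0]

print(f"naive: {naive_revenue}, governed: {governed_revenue}")
```

On this toy data the naive query reports 465.0 and the governed query reports 350.0. Nothing in the database flags the first number as wrong, which is why the definition has to come from documented business logic rather than from the schema.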

What Is Governed Context?

Instead of letting AI infer meaning from table and column names, governed context anchors every query to the semantic layer your data team has already validated—documented, agreed-upon business definitions that don't shift between analysts.

For organizations using dbt (data build tool), this means the AI operates on dbt models and respects dbt documentation. According to dbt Labs, "The dbt Semantic Layer, powered by MetricFlow, simplifies the process of defining and using critical business metrics... By centralizing metric definitions, data teams can ensure consistent self-service access to these metrics in downstream data tools and applications."

When the platform knows which metrics are canonical, which columns are deprecated, and what each KPI means to the business, it returns answers that pass a data team's review—not surface-level queries that happen to run without errors.

How Governed Context Improves AI Output Quality

Without governed context, AI analytics produces answers that look correct but can't survive a data team's review. Gartner predicts that "By 2030, universal semantic layers will be treated as critical infrastructure, alongside data platforms and cybersecurity... It is the only way to improve accuracy, manage costs, substantially cut AI debt, align multiagent systems, and stop costly inconsistencies before they spread."

The practical benefits include:

- Consistent definitions across all analyses—no more "dueling dashboards" where different departments calculate the same metric differently

- Validated assumptions where AI queries pre-joined, verified metrics instead of guessing table relationships

- Audit trails showing exactly which business definitions informed each answer

- Reduced hallucination risk because the AI operates on structured, documented data models rather than raw tables

Sylus: Built on Governed Context

Sylus grounds all analysis in dbt models and dbt documentation, operating as an AI data analyst that validates assumptions before returning results. Every answer—whether queried in plain English or directly from Slack—is anchored to the organization's validated semantic layer.

Business users get conversational interfaces and instant answers. Data teams keep governance through the dbt models that define what those answers mean. For enterprise deployments, Sylus also provides:

- SOC 2 Type II and HIPAA compliance for regulated industries

- Self-hosted deployment for organizations with strict data residency requirements

- No model training on customer data—neither Sylus nor its model partners use your data to train AI models

How to Evaluate and Choose a Data Intelligence Platform

Vendor claims vary widely in how well they hold up. The criteria below help separate substance from marketing:

Assess Fit for Your Data Team's Maturity and User Base

A platform built only for technical users will exclude the business stakeholders who need data most. Look for natural language interfaces, self-serve dashboards, and collaboration features that bring in non-technical users without bypassing governance.

The real challenge is balance. Platforms that strip governance for the sake of simplicity create data risk. The right choice lets business users ask questions freely while ensuring every answer respects documented business definitions and access controls.

Evaluate AI Depth, Not Just AI Marketing

Ask vendors specific questions that reveal whether their AI validates assumptions or just translates queries:

- How does it handle ambiguous questions? Does it ask for clarification, or guess and move on?

- How does it ground answers in business definitions? Look for semantic layer support — dbt integration, not raw table queries.

- Can it explain its reasoning? Methodology and assumptions should be surfaced, not buried.

- What happens when data quality is poor? Detection and flagging matter. Silent incorrect results are worse than no result.

Shallow NLP layers will fail these questions. Sylus approaches this differently: its AI analyst explores data thoroughly, validates assumptions at each step, and only surfaces results it can defend — not just the first plausible answer.

Check Security, Compliance, and Deployment Options

For data teams handling sensitive or regulated data, security isn't optional. Verify:

- Compliance certifications: SOC 2 Type II and HIPAA compliance demonstrate operational rigor. Vanta's research shows that 71% of organizations with partial security maturity have attained SOC 2, making it foundational for enterprise trust.

- Model training policies: Does the vendor train AI models on your data? Explicit policies protecting intellectual property matter. Sylus, for example, commits that neither the platform nor its model partners train on customer data.

- Deployment flexibility: IDC's 2024 Cloud Pulse survey reveals that "between 50% and 70% of cloud buyers want the ability to control where their data resides." For regulated industries, self-hosted deployment in air-gapped environments is often required.

Run through this checklist before signing any vendor contract. Left unchecked, these gaps tend to surface only after deployment, when they are far harder to fix.

Frequently Asked Questions

What is a data intelligence platform?

A data intelligence platform is an integrated system using AI and machine learning to consolidate data from multiple sources, surface actionable insights, and help organizations understand how their data is produced and used. Unlike traditional storage or reporting tools, it actively interprets data context and meaning to generate strategic intelligence.

How is a data intelligence platform different from a BI tool?

BI tools analyze structured historical data and generate pre-built reports—typically requiring SQL expertise and analyst involvement. Data intelligence platforms go further: they handle structured and unstructured data, support plain-English querying, and deliver real-time or predictive insights that business users can access directly.

What AI capabilities should a data intelligence platform have?

Key capabilities to evaluate:

- Natural language querying that interprets plain-English questions

- AI-generated dashboards and visualizations

- Automated assumption validation before returning answers

- Anomaly detection and alerting for data changes

- Governed context that grounds outputs in validated business definitions (like dbt models), not raw table guesswork

How does a data intelligence platform handle data governance and security?

Modern platforms enforce role-based access controls, maintain audit trails of data usage, and implement organizational governance policies. Leading platforms offer SOC 2 Type II and HIPAA compliance, self-hosted deployment options for data residency control, and explicit commitments not to train AI models on customer data.

Can non-technical users benefit from a data intelligence platform?

Yes. Business users can ask questions in plain English, generate dashboards, and receive verified answers without writing SQL or waiting on an analyst. Governed context keeps this accessibility accurate and compliant, so self-serve analytics scales safely across the organization.

What is governed context in AI-driven analytics?

Governed context means grounding AI analysis in validated, documented business definitions—such as dbt models and semantic layers. This ensures answers align with how the organization actually defines its metrics. Without it, AI can return results that are technically correct but semantically wrong—pulling from raw table names rather than real business logic.